Wichita has bought one robot dog, which is either the plainest sentence in municipal government or the beginning of a very useful argument.

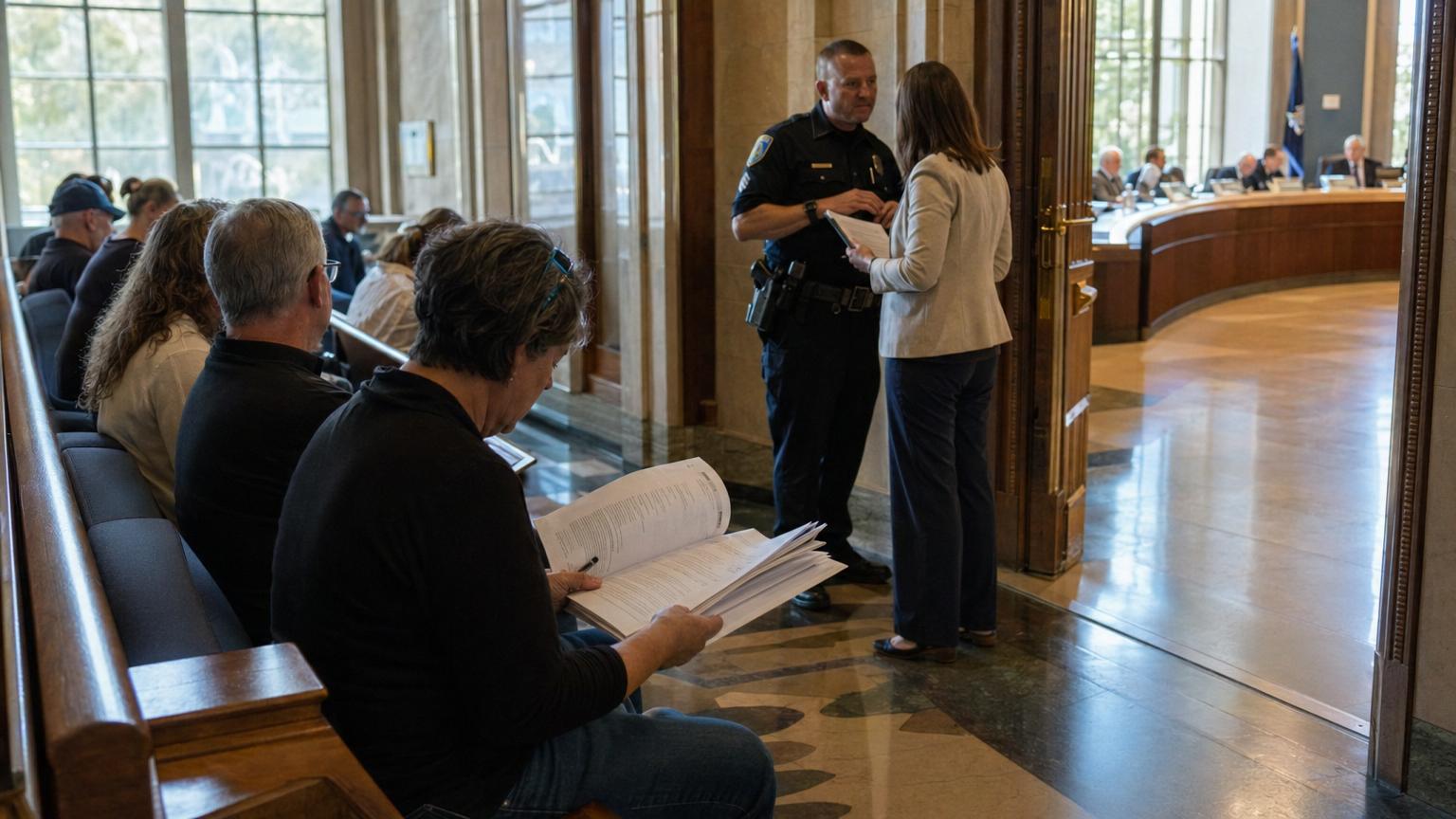

On April 7, the Wichita City Council voted 4-3 to let the Wichita Police Department buy a single Spot robotic dog from RADeCO, Inc., a company that sells Boston Dynamics’ four-legged robot platform and trains public-safety users on it. The city’s recap put the amended cost at $340,000. The department’s earlier request was for two robots, one for the bomb squad and one for SWAT, with a two-unit price listed in council materials and local reporting at $629,647.56. Instead, Wichita approved one machine, directed the department to work with that, and required the issue to come back to the council on July 7, 2026, with data and a policy update before any decision on a second robot.

That is the sturdy civic peg. The stranger part is the object itself. Spot is not a police dog in the warm, slobbery sense. It is a quadruped robot: a 74-pound industrial machine with cameras, batteries, software, joints, sensors and a tablet controller, built to move through places where wheels and tracks may struggle. Boston Dynamics markets Spot for factories, construction sites, utilities, mines, government agencies and emergency responders. With public-safety add-ons, the robot can be outfitted with a manipulator arm, cameras, a radio kit, thermal imaging, two-way communications and sensors for dangerous materials. Wichita police described a configuration that could open doors, climb stairs, provide live video, communicate with a person inside a high-risk scene, and help assess chemical, biological, radiological, nuclear or explosive hazards, often shortened to CBRNE.

The vote was close because the stakes are not a joke, even if the machine looks like the kind of thing a child would name before a parent could stop them. A robot that can walk into a barricaded house may keep an officer, a bomb technician, a bystander or a person in crisis alive. A robot that can walk into a house with cameras also raises a hard public question: what exactly has entered the home, under what rule, and who gets to see what it saw?

That is why Wichita’s one robot dog is bigger than Wichita. A local budget line has wandered into a national fight over police technology, public trust and whether cities can write rules quickly enough for machines that move faster than the meeting calendar.

One dog, two jobs

The Wichita Police Department’s case was simple in outline: the city already uses robots, but the robots it has cannot do everything the department now wants from a machine. Traditional bomb-squad robots often move on tracks or wheels. They can be powerful and stable, but they do not love stairs, narrow corners, doorway lips, piles of clothing, boxes, furniture, loose clutter or all the other little obstacles that make a real house different from a demonstration floor.

Captain Jason Cooley, presenting for Wichita police, gave council members an example from the day before the April 7 meeting. According to the council minutes, he said police had information that a wanted person in a shooting case was inside a residence. Officers used ordinary de-escalation steps, then tried to send in robotics. The larger tracked robot had trouble in a cluttered house. A drone could only go so far. Officers ultimately had to move forward more slowly with a smaller handheld robot. Cooley also acknowledged a point that mattered to skeptical council members: the department did not keep a statistic showing how often an existing robot failed at a task that Spot might have handled. He told the council the department would start collecting that data.

That admission made the vote more interesting. Wichita did not approve the robot after a clean spreadsheet proved the machine would prevent a certain number of injuries, save a certain number of dollars, or end a certain number of standoffs peacefully. The council acted on police testimony, examples from other jurisdictions, public worry, council judgment and a promise of future data. That is often how cities buy new technology. It is also why residents get nervous.

The approved robot is supposed to serve needs that were originally split between two units. Bomb squads deal with suspicious packages, explosive devices, old ordnance, unstable materials and hazardous scenes where distance is survival. SWAT teams handle high-risk warrants, barricaded people, hostage situations and armed confrontations. In both settings, the robot’s sales pitch is distance: send the machine into danger before sending a person.

Police supporters at the meeting framed the purchase as a life-safety tool, not a toy. A public commenter cited SWAT activations and argued that the robot could help officers gather information before deciding what force, if any, might be needed. Council supporters talked about officer safety and community safety. Wichita police said the robot would not require new staffing. Local reporting by KWCH described Spot as designed by Boston Dynamics, priced at $340,000 for Wichita’s approved package, and intended for de-escalation and difficult entry tasks.

Opponents and skeptics did not all reject the idea that robots can be useful. Their sharper question was whether the city had done enough homework before signing the check. Council members and residents raised cost, deferred maintenance, fire-station needs, stormwater and sanitation problems, recording practices, public access to footage, future operating costs and whether one costly machine would quietly normalize a broader surveillance system. Wichita has also been debating automated license plate readers, drones and other police technologies. That made the robot dog feel less like a single gadget and more like one metal paw in a much larger footprint.

Costs do not bark

The price did real political work. Wichita’s capital-improvement planning documents had already included money for police robotic dogs, and the April 7 vote authorized bond financing for the amended purchase. General obligation bonds are borrowed money backed by the city’s taxing power. That does not make a purchase irresponsible by itself; cities borrow for long-lived equipment and infrastructure all the time. But it does mean the cost is not imaginary. The council was not voting on a free sample from the future.

During the April 7 discussion, officials explained why the price of a police-ready Spot is far above the number residents may find online for a base robot. A bare platform is not the same as a public-safety package. Add an arm. Add a stronger radio link. Add a pan-tilt-zoom camera and thermal camera. Add sensors. Add training. Add warranty and support. The robot starts to look less like a dog and more like a small walking equipment room.

That explanation can be true and still leave residents with fair questions. What will the annual maintenance cost be after the first year? How many deployments will justify the price? How often will the robot be used for bomb calls, and how often for SWAT entries? Will it be available to other agencies through mutual aid, and if so, under whose policy? If the robot breaks during a regional call, who pays? If it records inside a home, how long is that recording kept? If it is used in a case that later goes to court, what must be disclosed?

Wichita’s compromise was to buy one and demand a report before buying another. That is a very city-council kind of answer. It neither slams the door on the technology nor throws the kennel open. It creates a deadline, July 7, and a test: can the department show not only that the robot is capable, but that it is governed?

The word governed matters. New technology often arrives wrapped in the language of emergency. Emergencies are real. Bomb technicians really do face danger. SWAT officers really do enter volatile rooms. People in crisis really do sometimes need time, distance and communication rather than a rushed human confrontation. But equipment bought for rare danger can drift into ordinary use if the policy is vague. A tool that starts as a way to inspect a bomb can become a way to search a backyard, patrol an event, check a house, accompany a warrant team, or reassure the public at a festival. Some of those uses may be reasonable. Some may not. The difference is the rulebook.

Robot dogs already have a reputation

Wichita is entering a story that other cities have already made messy.

New York’s robot dog became famous as Digidog, a name that somehow made the machine less cuddly. The New York Police Department first used Boston Dynamics’ robot in 2020, then ended an early contract after public backlash. Mayor Eric Adams later brought the concept back as part of a broader technology rollout. New York also has the Public Oversight of Surveillance Technology Act, known as the POST Act, which requires the NYPD to publish impact and use policies for surveillance technologies. But in 2024, New York City’s Department of Investigation criticized the NYPD’s compliance, saying the department’s practice of grouping distinct technologies under broad policies could limit public scrutiny. The department’s POST Act page now includes separate entries for remote-controlled tactical robots, throwbots and submersible remotely operated vehicles, a sign of how oversight categories can change after pressure.

Los Angeles had its own fight in 2023, when the City Council voted 8-4 to accept a donated Spot for the LAPD after months of criticism. Supporters said it would be used only in limited high-risk situations requiring SWAT. Critics worried about surveillance, militarization, cost after the donation, and harm to Black and Latino communities. The vote passed, but not because the controversy disappeared. It passed with quarterly reporting requirements attached.

San Francisco went even deeper into the thicket. In 2022, under a California law (Assembly Bill 481) requiring local approval of policies for certain law-enforcement equipment, supervisors debated whether police robots could be used for deadly force in extreme circumstances. The board first approved language allowing potentially lethal use under narrow conditions, then reversed course after public backlash and temporarily blocked that use while sending the issue back for more review. The point was not that every robot dog is a killer robot. The point was that once a city owns remote-controlled machines, it has to decide, in writing and in public, what uses are forbidden before a crisis invites improvisation.

Then there is the Massachusetts example that police departments cite for the strongest safety argument. In March 2024, a Massachusetts State Police robot dog named Roscoe entered a Barnstable home during a barricade situation. Police said the robot found an armed person in the basement and was shot three times. Boston Dynamics said it was the first time one of its Spot robots had been shot. Supporters saw the case as proof of concept: bullets hit a machine instead of a person. Critics did not necessarily deny that benefit. They asked what rules should surround a technology dramatic enough to change tactical decisions.

The oldest cautionary tale in this debate is not a dog at all. In 2016, Dallas police used a bomb-disposal robot carrying an explosive to kill Micah Johnson, who had murdered five police officers. The Texas Tribune reported at the time that experts described the Dallas action as the first known time U.S. police had used a robot to kill someone. The Dallas case was an extreme event, not a routine policy choice. But it still changed the debate. It showed that a robot designed for distance and safety can be adapted under pressure into a tool of force.

Boston Dynamics says its terms prohibit using Spot to harm or intimidate people or animals, using it as a weapon, or configuring it to enable a weapon. That matters. It also has limits. A manufacturer’s contract can void a warranty, restrict service or terminate software access. A city policy has to protect residents, direct officers, survive leadership changes, tell courts what happened and give the public a way to see whether the promise was kept.

The weirdness is useful

Robot dogs trigger a reaction that plain cameras do not. That reaction is not always rational, but it is not useless.

A camera on a pole can disappear into the background. A software contract can hide in an agenda attachment. A drone can become a distant buzz. A four-legged robot walking down a hallway is impossible to treat as ordinary. It looks like a visitor from a movie wandering into the purchasing department. People laugh, flinch, make jokes, take videos, call it dystopian, call it lifesaving, call it a toy, call it the future. The shape forces attention.

That attention can be healthy for democracy. Police technology is often adopted piece by piece: a camera network here, a drone policy there, a data-sharing agreement, a predictive tool, an acoustic sensor, a new database, a robot. Each item can be defended as limited and practical. Together they can change how residents experience public space and private homes. A robot dog is strange enough to make people ask the bigger question in plain language: how much machine presence do we want in policing, and who decides?

The answer cannot be simply no machines. Bomb squads have used robots for decades because humans are breakable and explosions are not forgiving. The National Institute of Justice was publishing work on better bomb-disposal robots in 2004, long before Spot became a public-safety symbol. Robots can inspect suspicious packages, carry phones, open doors, look around corners and enter hazardous places. If a machine can give negotiators more time, that can help the person inside the crisis as well as the officers outside it.

The answer also cannot be simply trust us. Trust is not a policy. It is what can grow after a policy is clear, followed and audited. The public should not have to wait for a scandal to learn when a robot may enter a home, whether it records, who can access footage, whether facial recognition or other analytics are attached, whether the robot may be used at protests, whether it may carry less-lethal tools, whether it may carry anything that can hurt a person, whether outside agencies may borrow it, and how mistakes are reported.

Boston Dynamics’ own materials recognize some of this tension. The company markets Spot for public-safety uses that keep responders out of harm’s way, and it says Spot is not designed for mass surveillance or to replace police officers. It also says it will not authorize partners who want to use its robots in ways that violate privacy or civil-rights laws. Those commitments are relevant, but they are not a substitute for local democratic control. A vendor can describe intended use. A city must define permitted use.

Policy should be boring on purpose

A good robot-dog policy would be less exciting than the robot. That is the point.

It should define approved uses. Bomb disposal, hazardous-materials checks, search and rescue, barricaded-person communication and high-risk scene assessment are different from routine patrol or crowd monitoring. If routine patrol is forbidden, say so. If event demonstrations are allowed, say whether recording is off. If mutual aid is allowed, say whose rules apply.

It should define forbidden uses. No weapon attachments. No delivery of explosives. No use to intimidate. No facial recognition unless separately approved, if the city ever considers it. No autonomous decisions about force. No quiet expansion into code enforcement, protest monitoring or general surveillance without a public vote. If exceptions exist for imminent threats, write them narrowly enough that residents can understand them before fear fills the room.

It should cover recording. The April 7 council discussion touched on whether Spot’s camera could stream or record, and whether footage could be stored locally rather than in a cloud system. Cooley told council members the robot is designed to provide a live feed and that recording would not operate like a body camera unless an operator screen-recorded or used local storage. Council members then pressed for clarity, with some saying recording could be important for transparency and evidence. Those are not technical footnotes. If the robot goes into a home, it may capture children, bedrooms, medical equipment, religious objects, private documents, arguments, fear and confusion. The city needs retention periods, access rules, audit trails, redaction procedures and disclosure standards.

It should count deployments in a way that answers the real question. A tally that says the robot was deployed 12 times is not enough. The report should say what kind of incident it was, whether the robot entered private property, whether it recorded, whether a warrant or exigent circumstance was involved, whether it communicated with a person, whether it carried sensors, whether any force followed, whether it failed, whether it was damaged, whether a complaint was filed, and what human risk it arguably reduced.
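
One way to picture such a report is as a structured record for each deployment rather than a bare count. The Python sketch below is only an illustration of that idea. The field names, the `Deployment` class, the `summarize` function and the two sample records are all invented for this example; they do not describe any system Wichita uses or has proposed.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """One robot deployment. Every field name here is hypothetical."""
    incident_type: str              # e.g. "bomb call", "barricade"
    entered_private_property: bool
    recorded: bool
    legal_basis: str                # e.g. "warrant", "exigent", "none needed"
    communicated_with_person: bool
    carried_sensors: bool
    force_followed: bool
    robot_failed: bool
    robot_damaged: bool
    complaint_filed: bool
    risk_reduced: str               # short narrative of the human risk avoided


def summarize(deployments):
    """Turn a list of records into the counts a council report could show."""
    return {
        "total": len(deployments),
        "by_incident_type": dict(Counter(d.incident_type for d in deployments)),
        "entered_private_property": sum(d.entered_private_property for d in deployments),
        "recorded": sum(d.recorded for d in deployments),
        "force_followed": sum(d.force_followed for d in deployments),
        "failed_or_damaged": sum(d.robot_failed or d.robot_damaged for d in deployments),
        "complaints": sum(d.complaint_filed for d in deployments),
    }


# Hypothetical sample records, not real Wichita data.
sample = [
    Deployment("bomb call", False, False, "none needed", False, True,
               False, False, False, False, "technician stayed at a distance"),
    Deployment("barricade", True, True, "warrant", True, False,
               False, True, False, False, "officers delayed entry"),
]
report = summarize(sample)
```

A summary built this way keeps the total, but it also shows how many deployments crossed into private property, how many were recorded and how many ended in failure or a complaint.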

It should include cost per use without pretending that cost per use settles everything. A fire truck may sit quietly for days and still be worth owning. A bomb robot may justify its price in one terrible afternoon. But if Wichita is choosing among fire-station repairs, water systems, transit, housing, parks and police equipment, residents deserve a clear view of what the machine costs after purchase.
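
The arithmetic itself is simple, and a rough Python sketch shows what it does and does not reveal. The $340,000 figure is the amended cost from the city’s recap. The deployment count of 12 and the zero operating cost are placeholders, not reported figures, and the function name is invented for this example.

```python
def cost_per_use(purchase_price, annual_operating_cost, years, deployments):
    """Total cost of ownership over `years`, divided by deployments."""
    if deployments <= 0:
        raise ValueError("cost per use is undefined without a deployment")
    total = purchase_price + annual_operating_cost * years
    return total / deployments


PURCHASE_PRICE = 340_000       # amended cost from the city's recap
PLACEHOLDER_DEPLOYMENTS = 12   # hypothetical count, not a reported figure
PLACEHOLDER_ANNUAL_COST = 0    # unknown; maintenance cost is an open question

first_year = cost_per_use(
    PURCHASE_PRICE, PLACEHOLDER_ANNUAL_COST, 1, PLACEHOLDER_DEPLOYMENTS
)
print(f"${first_year:,.2f} per deployment")
```

The result moves sharply with the two inputs the city has not yet published, annual operating cost and deployment count, which is why the number belongs in the report next to the incident details and not in place of them.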

It should also explain training. A robot that can open doors and climb stairs is only as good as the operators, supervisors and commanders who decide when to send it. RADeCO’s public training page describes Spot instruction that includes general operation, safety, stairs, obstacle avoidance, arm manipulation, AutoWalk missions and payload interfaces. Wichita’s policy question is not only whether operators can drive the robot. It is whether they know when not to.

July will tell the story

For now, Wichita has one approved robot dog and many unanswered questions. That is not a scandal by itself. It is a hinge.

The generous reading is that the council found a cautious middle. It listened to police, recognized a plausible safety need, cut the request from two robots to one, and required a return date. If the department uses the time before that date to write a strong public policy and collect meaningful data, Wichita could become a smaller-city model for how to adopt odd, powerful tools without waving away civil liberties.

The skeptical reading is that the city bought first and will define later. That order worries people because technology tends to become sticky once purchased. Staff train on it. Officers rely on it. Vendors support it. A department builds plans around it. By the time the public asks whether the tool should exist, the answer becomes: it already does.

Both readings can be partly true. Wichita did not buy two. Wichita did buy one. The council did demand a policy update. The robot will likely arrive before most residents have absorbed what such a policy should contain. This is how the future often shows up in local government: not as a thunderclap, but as an agenda item after lunch.

The most humane way to think about the robot is to keep everyone in the scene. Picture the officer outside a dark hallway who does not want to be shot. Picture the person inside the house who may be armed, terrified, suicidal, confused or dangerous. Picture the neighbor peering through blinds. Picture the bomb technician with a family. Picture the resident who has watched too many police technologies arrive with too few rules. Picture the council member trying to choose between a machine that might save a life and a roof, station, bus or pipe that also might save lives in quieter ways.

A robot dog does not solve that conflict. It carries it on four mechanical legs.

Wichita’s July 7 meeting, if the city treats it seriously, should be less about whether Spot is creepy or cute and more about whether Wichita can make a public promise precise enough to matter. The machine can climb stairs. The harder climb is civic: from fascination to rules, from rules to records, from records to trust.

Until then, the city has one robot dog. It also has one very useful deadline.