The AI debate has many frameworks and one recurring absence

The public argument about artificial intelligence is crowded with nouns. Safety. Alignment. Innovation. Regulation. Risk. Competitiveness. Productivity. Ethics. Those words matter, and most of them describe genuine pressures. Governments want economic advantage. Companies want speed. Workers want protection. Educators want guidance. Journalists want verification. Courts and regulators want standards sturdy enough to survive contact with actual cases. Yet in the middle of all that policy vocabulary, one old philosophical term keeps trying to return: judgment. The missing word in much of the AI debate is not intelligence, because the systems already produce outputs. The missing word is judgment, because institutions still have to decide what those outputs mean, whether they should be trusted, who can be harmed by them, and when a technically functioning system is still producing an unacceptable public result.[1][2][3][4]

That absence matters more now because the governance field is no longer empty. The OECD’s AI Principles were the first intergovernmental standard on AI and were updated in 2024 to reflect a changed landscape. UNESCO’s Recommendation on the Ethics of Artificial Intelligence, adopted in 2021, applies to all 194 member states and places human rights and human dignity at the center of its approach. NIST’s AI Risk Management Framework is already available as a voluntary resource, and the NIST AI Resource Center exists specifically to help organizations operationalize and test it as the framework is revised and expanded, including through resources for generative AI. In other words, the problem is not that society has failed to produce checklists, principles, or governance scaffolding. It is that none of those tools removes the need for people and institutions to judge well.[1][2][3][4]

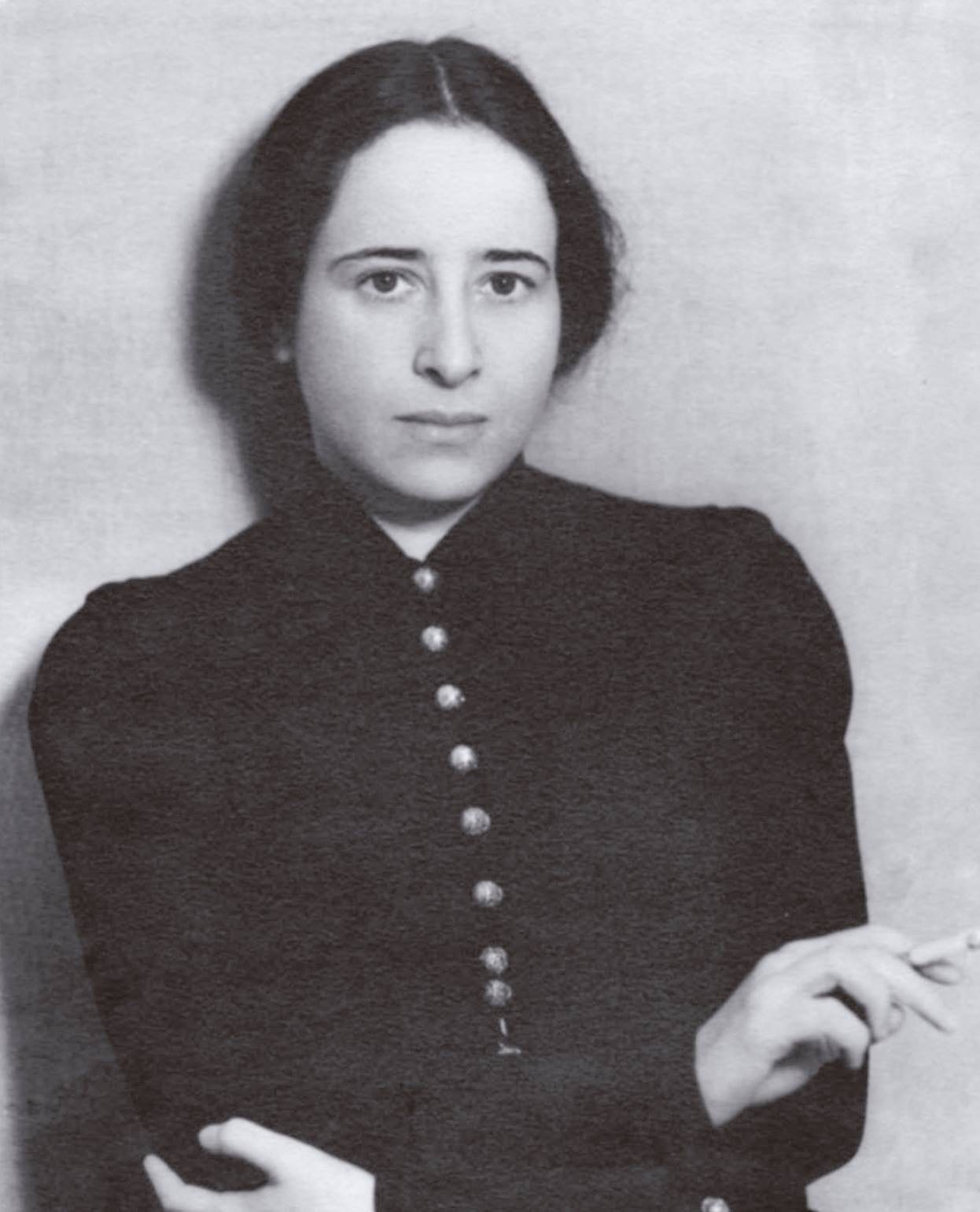

That is where Hannah Arendt becomes unexpectedly useful. Arendt was not writing about machine learning systems or frontier models. She was writing about politics, responsibility, plurality, and the conditions under which human beings stop thinking carefully enough about what they are doing. The reason she belongs in the AI conversation is not that she supplies instant regulatory answers. She does not. The reason she matters is that she helps name the human capacity current governance language often assumes but rarely examines: the ability to think in a way that loosens cliché and then judge particular cases from the standpoint of a shared world rather than from the comfort of procedure alone.[5]

Principles are necessary. They are not the same thing as judgment.

The major AI frameworks already point toward values most people would recognize as essential. The OECD says AI should be innovative and trustworthy while respecting human rights and democratic values, and explicitly acknowledges current risks including disinformation, data insecurity, and copyright infringement. UNESCO’s recommendation speaks in the language of rights, dignity, fairness, transparency, and human oversight. NIST’s framework is designed to help organizations govern, map, measure, and manage AI risk across the life cycle of systems. None of this is trivial. It is the backbone of responsible governance, and it is far better to have that backbone than to pretend AI can be left entirely to improvisation.[1][2][3][4]

But frameworks do not decide cases by themselves. A policy can say an organization values transparency; judgment has to decide what transparency requires in a hiring tool, a benefits triage system, a newsroom summarizer, or a school-facing chatbot. A framework can say human oversight matters; judgment has to decide when a human reviewer has enough context and authority to meaningfully intervene rather than merely rubber-stamp. A standard can say risks should be measured; judgment has to decide which harms are salient, which stakeholders are missing, and what to do when something measurable is still morally or politically unacceptable.[1][2][3]

This is why so much AI governance debate can feel as though it oscillates between grand moral language and narrow technical procedure. Principles without operational detail turn airy. Metrics without public reasoning turn brittle. Judgment is the bridge between them. It is the capacity that asks not only whether a system met its benchmark, but whether the benchmark captured what a public institution owed to the people living under its decisions. That is not sentimentality. It is governance.[1][2][3][4]

Arendt’s useful distinction is between thinking and judging

The Stanford Encyclopedia of Philosophy’s account of Arendt helps here because it emphasizes something subtler than “have opinions.” For Arendt, thinking is the activity that unsettles fixed formulas and interrupts the ease with which people repeat ready-made language. Judgment, by contrast, concerns the evaluation of particulars in the absence of prepackaged universal rules that do all the work for us. The point is not that rules are worthless. The point is that the world keeps producing cases whose meaning cannot be settled by a formula alone.[5]

Arendt also develops a concept often translated as “enlarged mentality” or described as representative thinking: the effort to see from the perspective of others who share a common world. That does not require abandoning one’s own standpoint. It requires expanding it. Judgment, on this view, is not merely private taste or bureaucratic box-checking. It is a public faculty. It asks whether one can consider how a matter appears to those who are differently situated yet equally present in the world one is shaping. In Arendt’s vocabulary, that is part of what makes political judgment possible rather than merely administrative reaction.[5]

Read that slowly and it becomes immediately relevant to AI. Most contested AI deployments are not hard because nobody can write down a principle. They are hard because institutions must decide how a system appears and operates from the standpoint of multiple affected groups at once. Designers see usefulness. Managers see efficiency. Lawyers see liability. Users see convenience. People subject to error or exclusion see something else. Judgment is the practice of holding those standpoints in view without pretending they collapse into a single frictionless metric.[2][4][5]

AI systems produce outputs. Institutions still own decisions.

That distinction sounds obvious until one watches real organizations use AI. A model can rank candidates, summarize case files, flag anomalous transactions, generate classroom materials, or draft a news brief. In each case the tempting story is that the machine has made part of human judgment unnecessary. Very often what it has done instead is move judgment to a different layer, where it becomes easier to ignore because the output arrives with technical polish. Someone still had to choose the task, the training data, the threshold for action, the escalation path, the appeal process, the retention rules, and the point at which a person can override the system. None of that is automatic. All of it is judgment-bearing work.[1][2][3]

NIST’s framework is helpful precisely because it does not treat AI as a pure engineering object. It treats risk management as a socio-technical exercise involving context, measurement, governance, and ongoing management. That is a quiet admission of something important: good AI governance is not only about model performance. It is about institutions understanding what they are doing when they place models inside systems of work, authority, and accountability. Operationalization, testing, evaluation, verification, and validation all matter. They still do not answer every question that arises when a system’s acceptable statistical behavior produces unacceptable lived consequences.[1][2]

That is where judgment should enter explicitly rather than accidentally. Instead of pretending that human oversight exists whenever a person remains somewhere in the workflow, organizations should ask whether the human role is genuinely interpretive, contestable, and responsible. Can the reviewer understand the basis of the recommendation? Can they depart from it? Are affected people told enough to challenge the result? Has anyone designed the process from the perspective of those who bear the cost of error? These are not decorative questions appended to a technical system. They are questions about whether the system belongs in a democratic institution at all.[1][2][3][4][5]

Representative thinking is a governance method, not a literary flourish

One of the most practical things Arendt offers the AI debate is a way to think about perspective-taking without reducing it to branding language. When UNESCO centers human dignity and rights, or the OECD emphasizes democratic values, both are implicitly saying that AI cannot be governed only from the standpoint of builders and buyers. The relevant standpoint is broader. It includes people who are sorted, scored, surveilled, translated, recommended to, summarized, and sometimes silently mischaracterized by these systems. Representative thinking asks decision makers to include those standpoints before harm becomes scandal.[3][4][5]

In practice, that means governance processes should be designed to surface plurality rather than suppress it. Procurement reviews should involve the people who understand operational constraints and the people likely to experience error. Testing should include not only benchmark performance but context-specific stress cases. Documentation should preserve not only what a model can do but what trade-offs were accepted in adopting it. Appeals and redress should not be afterthoughts. These are procedural implications of a philosophical point: judgment improves when institutions refuse to treat one internal perspective as the whole of reality.[1][2][3][4][5]

The popular version of AI governance often imagines a choice between speed and caution, as though the only question were how many brakes innovation can tolerate. Representative thinking reframes the issue. The real question is whose reality is counted when systems are deployed at speed. If the benefits are internalized by organizations while the harms are externalized onto workers, students, patients, customers, or citizens who cannot meaningfully contest an error, the institution has not governed well simply because it moved fast. It has confused velocity with judgment.[2][3][4][5]

The most dangerous failure mode is procedural numbness

Arendt’s work is often invoked through the phrase “the banality of evil,” sometimes too loosely. The more useful warning for AI governance is narrower and less theatrical. Arendt argued that grave political failure can be accompanied by an inability or unwillingness to think from the standpoint of others and to judge what one is actually participating in. That warning need not be inflated into melodrama to matter. Modern institutions already know how to let responsibility dissolve into workflow. Add a polished model output and it becomes even easier to say, implicitly, “the system recommended it,” as though that settled the moral and civic question.[5]

This is not an argument that AI systems are equivalent to the historical catastrophes Arendt analyzed. They are not, and loose analogy would only flatten both subjects. It is an argument that bureaucratic environments are always vulnerable to a certain moral anesthesia. People become fluent in process and less attentive to meaning. Judgment decays not because nobody has values, but because nobody pauses long enough to interpret a particular case outside the grammar of efficiency. That danger is already visible in institutions tempted to treat accuracy dashboards as substitutes for accountability or “human in the loop” as a synonym for legitimacy.[1][2][5]

Once that numbness takes hold, governance language can become self-protective rather than self-critical. Audits turn into rituals. Documentation becomes a shield rather than a learning tool. Oversight exists on paper while affected people experience opacity in practice. The cure is not anti-technology romanticism. It is a more honest account of what technology cannot remove from public life: the need for people to judge, answer, revise, and sometimes refuse.[1][2][3][4][5]

What judgment would look like in AI governance

If organizations took judgment seriously, the change would not begin with grand declarations. It would begin with recurring questions. Who is exposed to this system’s error and in what ways? Which perspectives were absent when we defined acceptable performance? What evidence would tell us the system is technically functioning but publicly failing? Under what conditions must a person review, reverse, or stop the system altogether? Where can affected people contest a result, and who is obligated to answer them? Those are judgment questions because they force institutions to relate technical tools to a shared world rather than to an internal dashboard alone.[1][2][3][4][5]

In that sense, the future of AI governance may depend less on finding a final master principle than on cultivating better institutional habits of interpretation. NIST can continue refining operational resources. UNESCO and OECD can continue articulating international norms. Legislatures and regulators can continue building formal obligations. All of that should continue. But none of it will be enough if the people using these systems imagine that governance can be reduced to compliance without public judgment. A society can have frameworks and still fail to think clearly about what its tools are doing to the world it shares.[1][2][3][4][5]

The missing word in the AI debate is judgment because judgment is the faculty that keeps every other word honest. It asks whether safety language corresponds to actual safety, whether transparency is intelligible to those subject to decisions, whether innovation is compatible with dignity, and whether a system that works for the organization is also justifiable in public. That is not a nostalgic demand for slower times. It is a practical demand for institutions wise enough to know that no matter how impressive the outputs become, the burden of judgment never leaves the humans who deploy them.[1][2][3][4][5]

Source notes

Primary documents, datasets, and institutional references used for this story.

- 1. National Institute of Standards and Technology, AI Resource Center.

- 2. National Institute of Standards and Technology, AI Risk Management Framework.

- 3. OECD, OECD AI Principles.

- 4. UNESCO, Recommendation on the Ethics of Artificial Intelligence.

- 5. Stanford Encyclopedia of Philosophy, Hannah Arendt.

Corrections status

No corrections have been posted to this story as of April 6, 2026 • 3:02 p.m. EDT. For amendments after launch, use the corrections workflow linked in the footer.